On August 1, 2024, the European Union took a significant step in regulating artificial intelligence (AI) when the AI Act entered into force. This landmark legislation establishes a framework that sets ethical and practical limits on AI technologies within the EU's member states. The regulation stems from the recognition that AI applications can introduce risks and ethical dilemmas, particularly in sensitive domains such as healthcare and employment. A team of researchers, including computer science professor Holger Hermanns from Saarland University and law expert Anne Lauber-Rönsberg from Dresden University of Technology, is examining this new landscape and its consequences for software developers and programmers.

Questions from Programmers

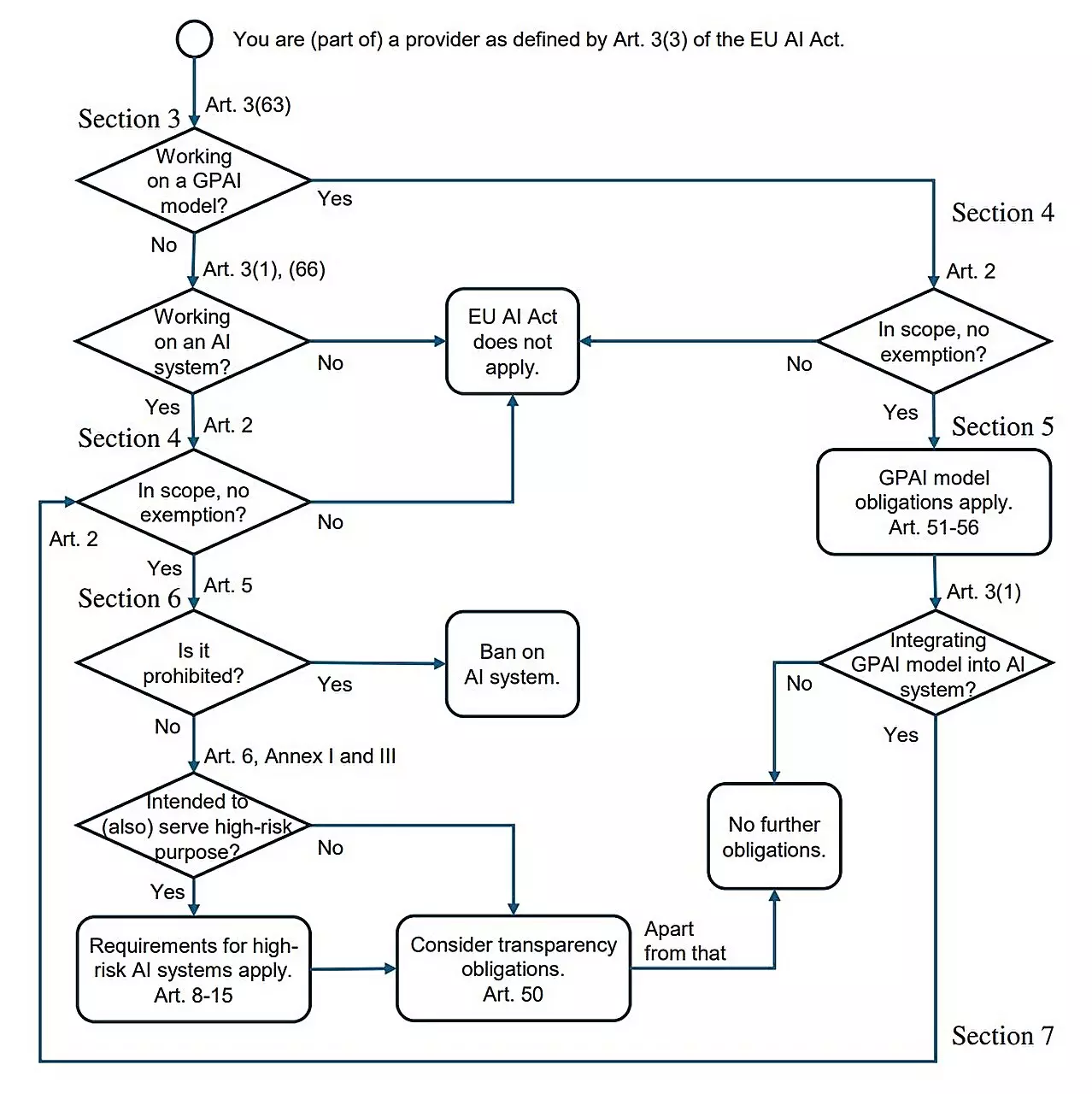

One question has come up repeatedly among programmers as the AI Act takes effect: “What must I comprehend about this law?” The AI Act runs to 144 pages, a daunting read for most busy developers. To address this disconnect, Hermanns and his team have drafted a research paper titled “AI Act for the Working Programmer.” The paper aims to bridge the gap between the legislation and the everyday realities of software development by outlining the law's essential takeaways, so that developers do not have to work through the full text.

Programmers rarely have hours to spend dissecting legal documents, which leaves gaps in their knowledge of what compliance with the AI Act requires. Hermanns emphasizes that many developers can continue their usual work largely unchanged, but those who build high-risk AI systems must pay close attention to the details of the legislation.

The AI Act focuses primarily on systems classified as high-risk, meaning those with greater implications for user safety and fairness. Examples include systems that screen job applicants, estimate credit ratings, or support medical diagnostics. Developers working on such systems are subject to stringent rules designed to minimize adverse outcomes.

A significant responsibility falls upon programmers to ensure that their training data is both reliable and representative. Failure to account for biases in the data set could embed discriminatory behavior in the AI model. When creating educational or medical software, developers must also keep comprehensive logs that record the AI’s decision-making, much as a flight recorder (black box) does in aviation. This provision serves two purposes: it supports accountability, and it helps improve AI systems over time, because the logs allow errors to be diagnosed and problems to be reconstructed after the fact.
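As a rough illustration of these two duties, the sketch below checks how well each group is represented in a training set and appends every decision to a log that can be replayed later. It is a minimal sketch under stated assumptions, not a compliance recipe: the function names, the `age_band` attribute, the 30% threshold, and the log fields are all hypothetical, and the AI Act itself does not prescribe any of them.

```python
import io
import json
from collections import Counter
from datetime import datetime, timezone


def group_shares(records, attribute):
    """Return each group's share of the records for one attribute."""
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(shares, min_share):
    """List groups whose share is below a threshold chosen by the developer."""
    return sorted(group for group, share in shares.items() if share < min_share)


def log_decision(log_file, model_version, inputs, output):
    """Append one decision as a JSON line so it can be reconstructed later."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
    }
    log_file.write(json.dumps(entry) + "\n")
    return entry


# Hypothetical training records; a real check would cover every relevant attribute.
training = [
    {"age_band": "18-30"},
    {"age_band": "18-30"},
    {"age_band": "18-30"},
    {"age_band": "60+"},
]
shares = group_shares(training, "age_band")
print(underrepresented(shares, min_share=0.3))  # groups below the assumed 30% floor

# An in-memory buffer stands in for an append-only log file.
log = io.StringIO()
log_decision(log, "model-v1", {"age_band": "60+"}, "refer to human")
print(log.getvalue())
```

Writing one JSON object per line keeps the log append-only and easy to filter, which is what makes a later error diagnosis practical.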

The strict provisions of the AI Act do not apply to all AI systems. For example, applications that simulate characters in video games or filter spam are largely exempt from the extensive compliance measures mandated for high-risk systems. Because of this categorization, Hermanns and his colleagues expect that the majority of programmers will experience little disruption to their workflows.

Still, the high-risk category remains central to the scope of the legislation. When these systems enter the market or go into operation, developers must ensure full compliance, not only with the requirements on logs and training data but also by providing comprehensive documentation of how the AI functions. Human oversight is essential, so developers must give the people who operate the system the tools they need to supervise it effectively.
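One way to picture such an oversight tool is a review step in which a person can inspect and override the system's output. The following is a minimal sketch, assuming a model that reports a confidence score; the `Decision` class, the `needs_review` and `override` helpers, and the 0.9 threshold are hypothetical and are not taken from the AI Act or from the researchers' paper.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Decision:
    label: str
    confidence: float
    decided_by: str = "model"  # "model" or "human"


def needs_review(decision, threshold=0.9):
    """Flag model decisions whose confidence is below the assumed threshold."""
    return decision.decided_by == "model" and decision.confidence < threshold


def override(decision, reviewer_label):
    """Record the human reviewer's label and mark the decision as human-made."""
    return replace(decision, label=reviewer_label, decided_by="human")


# A low-confidence model output is routed to a person, who overrides it.
model_decision = Decision(label="reject", confidence=0.62)
if needs_review(model_decision):
    final_decision = override(model_decision, reviewer_label="accept")
else:
    final_decision = model_decision
print(final_decision)
```

Keeping the original confidence and a `decided_by` field on the final record means the log can later show both what the model proposed and who made the final call.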

Overall, the AI Act serves as a regulatory framework intended to balance innovation with ethical responsibility. Hermanns notes that the law places significant constraints on high-risk AI systems, while the majority of AI applications remain unaffected. Research and development in both the public and private sectors also remain free, a flexibility that is critical for driving technological progress without stifling creative exploration.

Moreover, the regulatory landscape created by the AI Act does not place the EU at a disadvantage globally. Instead, many experts, including Hermanns, view the legislation as a step toward coherent international standards for the use of AI.

As AI continues to permeate various facets of society, understanding and navigating these regulations will be imperative for developers. The AI Act is an important stride in addressing ethical concerns while fostering a responsible approach to AI advancement, ultimately shaping the future interaction between technology and society.