In recent years, speech emotion recognition (SER) has gained considerable traction, driven largely by advances in deep learning. SER systems are increasingly deployed in fields ranging from healthcare to customer service, where they infer emotional states from spoken language. Machines that can recognize the subtleties of human emotion offer an opportunity to improve interaction and empathy in human-machine communication. These advances, however, come with a significant challenge: the deep learning models behind them are vulnerable to adversarial attacks.

Adversarial attacks, which use deliberately manipulated inputs to deceive machine learning models, pose a serious risk to the integrity of SER systems. A recent study by a team from the University of Milan examined the effects of both white-box attacks, in which the attacker has full access to the model, and black-box attacks, in which the attacker sees only the model's outputs, on SER systems across different languages and genders. The findings, published in the journal *Intelligent Computing*, show that convolutional neural network long short-term memory (CNN-LSTM) architectures are highly susceptible to these attacks. The researchers evaluated model resilience using several adversarial techniques, including the Fast Gradient Sign Method and the Carlini and Wagner attack.
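
To make the white-box idea concrete, here is a minimal sketch of the Fast Gradient Sign Method, which nudges every input feature by a fixed step `epsilon` in the direction that increases the model's loss. It runs on a toy logistic-regression classifier, not the study's CNN-LSTM, and the weights, input features, and `epsilon` are all hypothetical values chosen for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """Probability of class 1 under a toy logistic-regression classifier."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, epsilon):
    """Fast Gradient Sign Method: x_adv = x + epsilon * sign(dL/dx).

    For logistic regression with cross-entropy loss, dL/dx = (p - y) * w,
    so the attacker needs the weights: this is a white-box attack.
    """
    p = predict_proba(w, b, x)
    grad = [(p - y) * wi for wi in w]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model and input (stand-ins for a trained SER model and
# the acoustic features of one utterance whose true label is class 1).
w = [1.5, -2.0, 0.5]
b = 0.1
x = [0.8, -0.3, 0.4]
y = 1

x_adv = fgsm(w, b, x, y, epsilon=0.6)
p_clean = predict_proba(w, b, x)
p_adv = predict_proba(w, b, x_adv)
print(f"clean confidence: {p_clean:.2f}, adversarial confidence: {p_adv:.2f}")
```

In this toy setup the model's confidence in the correct class falls from roughly 0.89 to roughly 0.43, so the predicted label flips even though no feature moved by more than `epsilon`.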

The study found that every type of attack tested substantially degraded the performance of SER models. The authors emphasized that such vulnerabilities could lead to “serious consequences.” This highlights the pressing need for developers and researchers not only to make SER systems more robust but also to develop active defenses against deliberate exploitation.

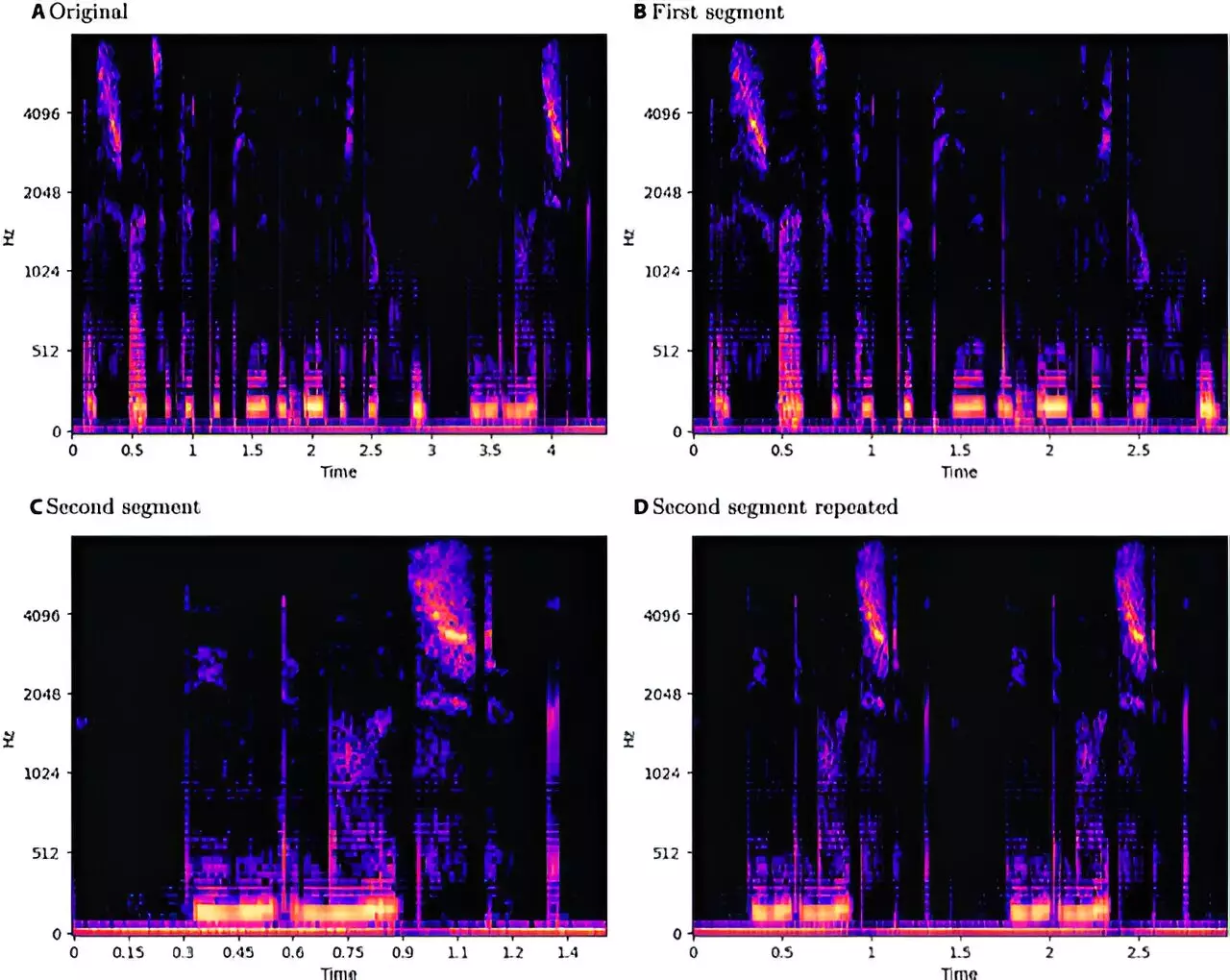

The study analyzed three datasets: EmoDB for German, EMOVO for Italian, and RAVDESS for English. The research team built a standardized pipeline for processing samples, extracting log-Mel spectrograms as input features and augmenting the data through pitch shifting and time stretching. The aim was to keep processing consistent across the language samples while measuring how much each adversarial method changed performance.
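
For readers unfamiliar with the feature extraction step, the following is a rough sketch of how a log-Mel spectrogram can be computed. It uses only the Python standard library so that it runs anywhere; the frame size, hop size, number of Mel bands, and the synthetic 440 Hz test tone are assumptions for illustration, not the study's settings. Pitch shifting and time stretching are omitted because a faithful version needs a phase vocoder or an audio library such as librosa.

```python
import cmath
import math

def hz_to_mel(f):
    return 2595.0 * math.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def power_spectrum(frame):
    """Naive DFT power spectrum of one Hann-windowed frame (bins 0..N/2)."""
    n = len(frame)
    windowed = [s * (0.5 - 0.5 * math.cos(2 * math.pi * i / n))
                for i, s in enumerate(frame)]
    spec = []
    for k in range(n // 2 + 1):
        acc = sum(s * cmath.exp(-2j * math.pi * k * i / n)
                  for i, s in enumerate(windowed))
        spec.append(abs(acc) ** 2)
    return spec

def mel_filterbank(n_mels, n_fft, sr):
    """Triangular filters whose centres are evenly spaced on the Mel scale."""
    max_mel = hz_to_mel(sr / 2)
    hz_points = [mel_to_hz(max_mel * i / (n_mels + 1)) for i in range(n_mels + 2)]
    bin_hz = [k * sr / n_fft for k in range(n_fft // 2 + 1)]
    bank = []
    for m in range(1, n_mels + 1):
        lo, mid, hi = hz_points[m - 1], hz_points[m], hz_points[m + 1]
        bank.append([max(0.0, min((f - lo) / (mid - lo), (hi - f) / (hi - mid)))
                     for f in bin_hz])
    return bank

def log_mel_spectrogram(signal, sr, n_fft=128, hop=64, n_mels=8):
    """Return a list of frames, each a list of n_mels log energies in dB."""
    bank = mel_filterbank(n_mels, n_fft, sr)
    out = []
    for start in range(0, len(signal) - n_fft + 1, hop):
        power = power_spectrum(signal[start:start + n_fft])
        mel_energy = [sum(w * p for w, p in zip(row, power)) for row in bank]
        out.append([10.0 * math.log10(e + 1e-10) for e in mel_energy])
    return out

# Hypothetical input: a 440 Hz sine tone standing in for a speech recording.
sr = 8000
tone = [math.sin(2 * math.pi * 440 * i / sr) for i in range(512)]
spec = log_mel_spectrogram(tone, sr)
print(len(spec), "frames x", len(spec[0]), "Mel bands")
```

The resulting frames-by-bands matrix is the kind of image-like input a CNN-LSTM can consume, and it is also the representation an adversarial perturbation ultimately has to change.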

Notably, the study found that black-box attacks sometimes outperformed white-box ones. This is an unsettling result, because it suggests that attackers can do significant damage without any access to the inner workings of an SER model. It raises questions about the defensive capabilities of existing systems and underscores the need for greater transparency about their vulnerabilities.
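
The sketch below illustrates why a black-box attacker does not need gradients. It is a simple random-search attack that only calls the model and reads back its confidence score. This is a generic illustration rather than any of the attacks used in the study, and the toy logistic model, its weights, the input, and `epsilon` are hypothetical.

```python
import math
import random

def make_black_box(w, b):
    """Wrap a toy model so the attacker can only query it, never inspect it."""
    def query(x):
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        return 1.0 / (1.0 + math.exp(-z))  # confidence for class 1
    return query

def random_search_attack(query, x, epsilon, max_queries=200, seed=0):
    """Score-based black-box attack.

    Tries random +/-epsilon perturbations of the input and keeps the one
    that lowers the model's confidence the most. Stops as soon as the
    confidence drops below 0.5, i.e. the predicted label flips.
    """
    rng = random.Random(seed)
    best_x, best_score = list(x), query(x)
    for _ in range(max_queries):
        candidate = [xi + epsilon * rng.choice((-1.0, 1.0)) for xi in x]
        score = query(candidate)
        if score < best_score:
            best_x, best_score = candidate, score
        if best_score < 0.5:
            break
    return best_x, best_score

# Hypothetical victim model and input; the attacker sees only `query`.
query = make_black_box(w=[1.5, -2.0, 0.5], b=0.1)
x = [0.8, -0.3, 0.4]
adv, adv_score = random_search_attack(query, x, epsilon=0.6)
print(f"clean confidence: {query(x):.2f}, after attack: {adv_score:.2f}")
```

The attacker pays in queries rather than in knowledge of the model, which is why limiting or monitoring query access is often discussed as one line of defense.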

The research also examined how language and gender affected SER performance under attack. Model performance varied only slightly across the three languages, with English appearing the most susceptible to adversarial interference and Italian the most resistant. Male speech samples appeared slightly more robust than female samples, although the differences in accuracy and perturbation levels between the two were minimal.

Understanding these gender-based differences can inform how SER technologies are developed and tested, helping ensure that they are not only effective across demographics but also hardened against targeted attacks. Even small disparities, once identified, can guide defenses tailored to specific groups of speakers.

The researchers also address the ethics of disclosing vulnerabilities in SER systems. They argue that withholding such findings could do more harm than sharing them, because when both attackers and defenders understand a system's weaknesses, defenses can be strengthened. By publicly acknowledging these weaknesses, they suggest, the SER community can work proactively to harden existing models.

As SER technologies continue to evolve, awareness of their vulnerabilities must keep pace. The research by the University of Milan team is a reminder that vigilance is essential as adversarial tactics develop rapidly. Striking a balance between transparency and the protection of sensitive information will be key to building robust and trustworthy SER systems.